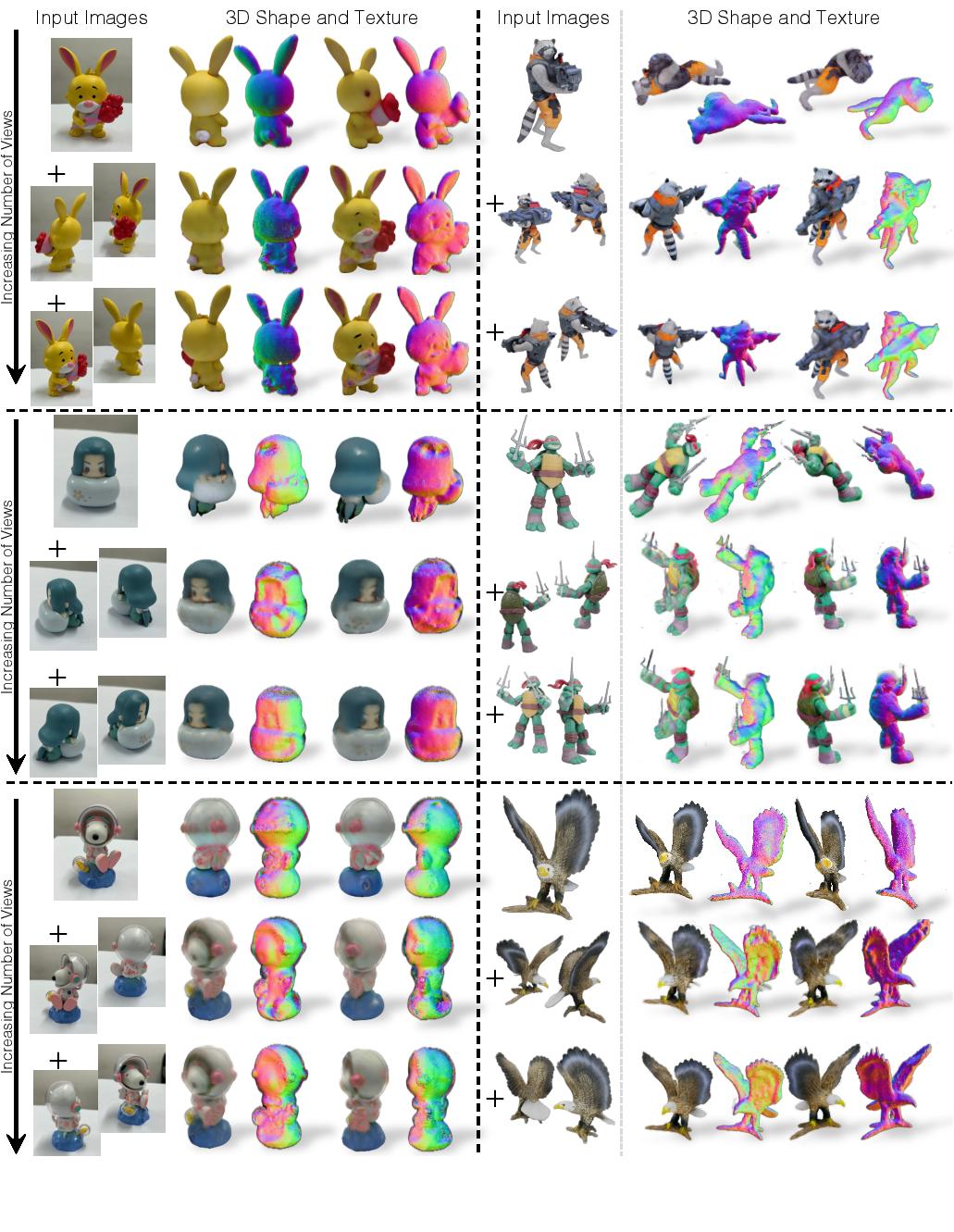

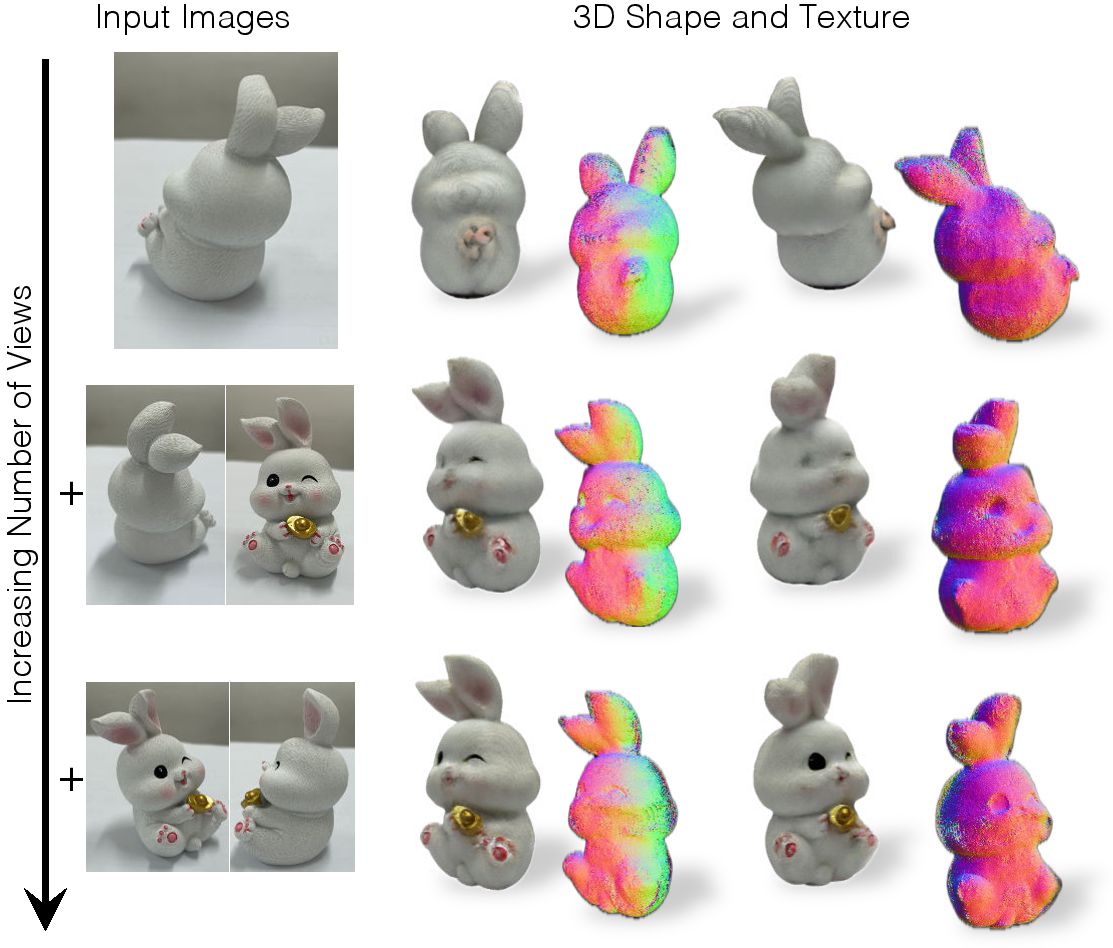

3D from one or more unposed views. Our system reconstructs the 3D shape and texture of an object from a variable number of real input images. The first, second, and third rows show reconstructions from 1, 3, and 5 input images, respectively. The quality of the reconstructed shape and texture improves as more views are provided.